IBC - 2. LED, Texture, Unity, Art, Robby

The IBC Show is the occasion to review the current themes in cinema technology, and to peek into the future.

This post continues my impressions of some of the current and future cinema technology recently presented at the IBC trade show in Amsterdam. My previous post discussed HDR, Full Frame, Red Hydrogen, the Netflix Effect, Direct View, presentations by Geoff Boyle, NSC, and Bill Bennett, ASC, and TrueMotion.

+++

PART TWO

1. LED Smart Light

2. Texture Equalizer

3. VFX in Real Time

4. VR

5. Cinematic Art

6. Robby Müller Film

7. ASC Cruise

+++

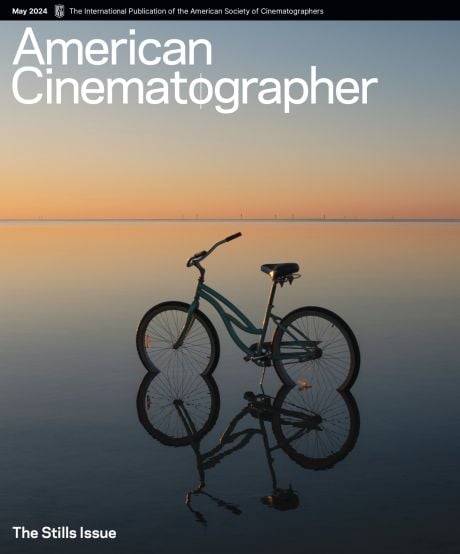

I begin with these photos of the gentleman making tiny Dutch pancakes outside the main entrance to IBC. Is it just me or does his grill look like a sensor with photosites? Do you see how each well has more or less oil - sort of like light filling photosites? You don't? ... Never mind :)

+++

1. LED Smart Light

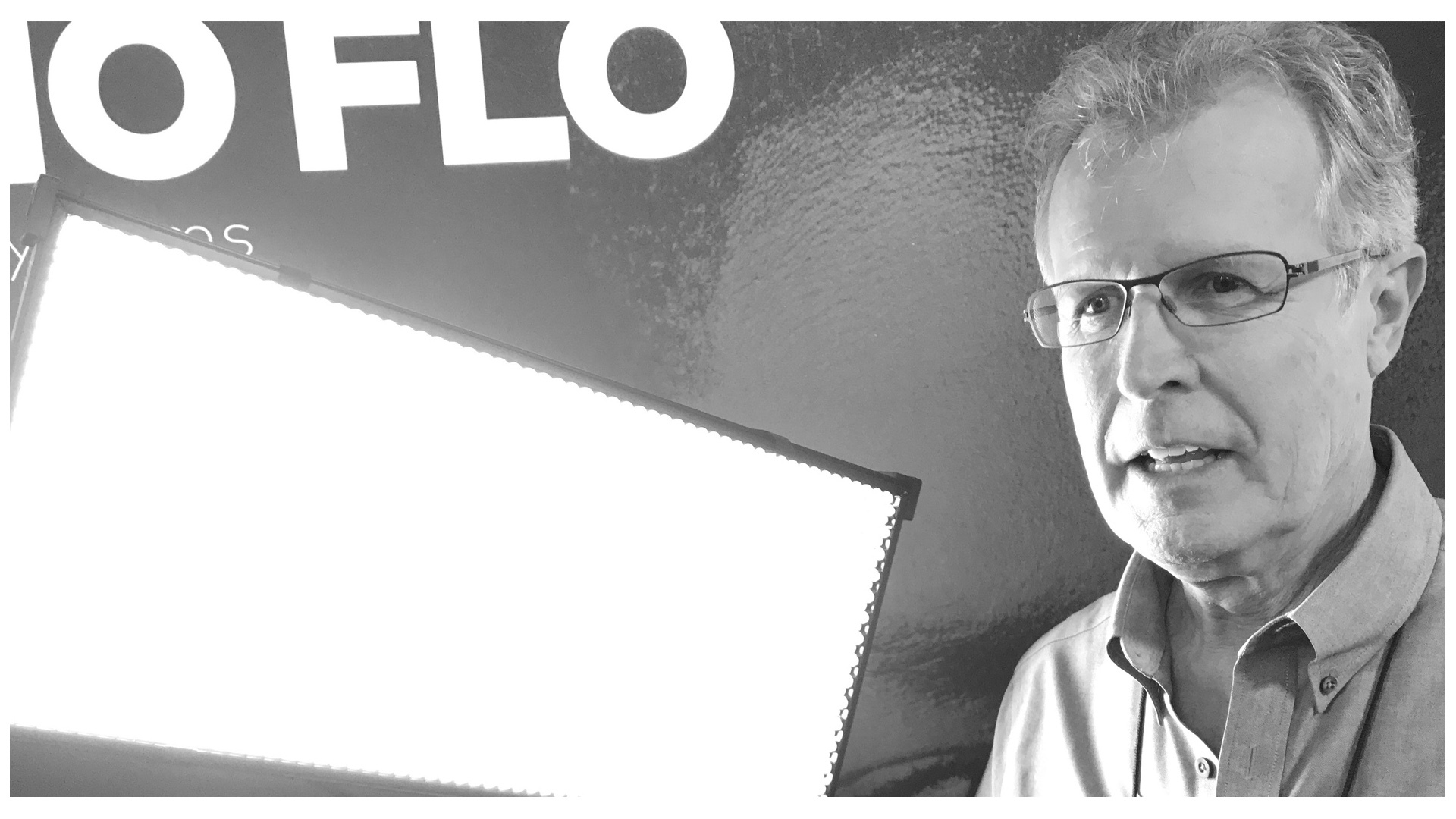

For me, the maturity of LED lighting is demonstrated by the profusion of LED products at companies traditionally defined by other sources, like K5600 Lighting (known for HMIs) and Kino Flo (pioneers of fluorescents).

+++

One of the revolutionary aspects of LED lighting is a wide color range which is not available in other types of sources, and which can be precisely and remotely controlled by computer via a DMX signal. This is especially true of LED sources with 4 or 5 primaries, say amber and/or white in addition to RGB, which add more finesse to the color range.
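
To make the idea of computer control concrete, here is a minimal Python sketch of how a color might be turned into DMX channel levels. A DMX512 universe carries up to 512 channels of 8-bit values. Everything else here is an assumption for illustration: the fixture profile (red, green, blue and white on four consecutive channels), the start address, and the naive way of moving the shared grey component onto the white emitter, which ignores the real color of each LED. Sending the frame to a fixture requires a DMX interface, which is not shown.

```python
# Sketch only: the channel layout below (R, G, B, W on consecutive channels)
# is a hypothetical fixture profile, not taken from any real product.

DMX_CHANNELS = 512  # a DMX512 universe carries up to 512 channel values


def rgb_to_rgbw(r, g, b):
    """Move the shared grey component of an RGB color onto a white emitter."""
    w = min(r, g, b)
    return r - w, g - w, b - w, w


def build_frame(start_address, levels):
    """Return 512 channel bytes with `levels` (0-255) written from a 1-based address."""
    if not 1 <= start_address <= DMX_CHANNELS - len(levels) + 1:
        raise ValueError("fixture does not fit in the universe")
    if any(not 0 <= v <= 255 for v in levels):
        raise ValueError("DMX levels are 8-bit values (0-255)")
    frame = bytearray(DMX_CHANNELS)
    offset = start_address - 1
    frame[offset:offset + len(levels)] = bytes(levels)
    return frame


warm_pastel = rgb_to_rgbw(255, 200, 150)   # -> (105, 50, 0, 150)
frame = build_frame(start_address=10, levels=warm_pastel)
```

A fixture with five primaries would simply occupy one more channel, which is part of why extra emitters add finesse without changing how the light is controlled.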

We are entering the era of the smart light. Among its many potential functions, a smart light should be able to adjust itself to match its environment, or to match other lights, or the camera type.

Kino Flo’s founder Frieder Hochheim demonstrated his familiar tube sources re-purposed with LEDs inside. Frieder said that Kino Flo has begun to measure and model the color rendition of professional video cameras from Arri, Panasonic, Panavision and Sony. In the future, cinematographers will be able to select the white setting of their Kino Flo sources to suit their camera model, with the additional option of tweaking the Magenta/Green value.

+++

My friend Marc Galerne gave Stephen Pizzello and me a private demo of prototypes of new LED fixtures by K5600 Lighting. For now, we are sworn to secrecy, but suffice it to say that the manufacturer’s first LED products are promising indeed.

+++

2. Texture Equalizer

DI grading tools now extend beyond color and contrast control within geometric windows to individual pixels, and even to frequency components of the image.

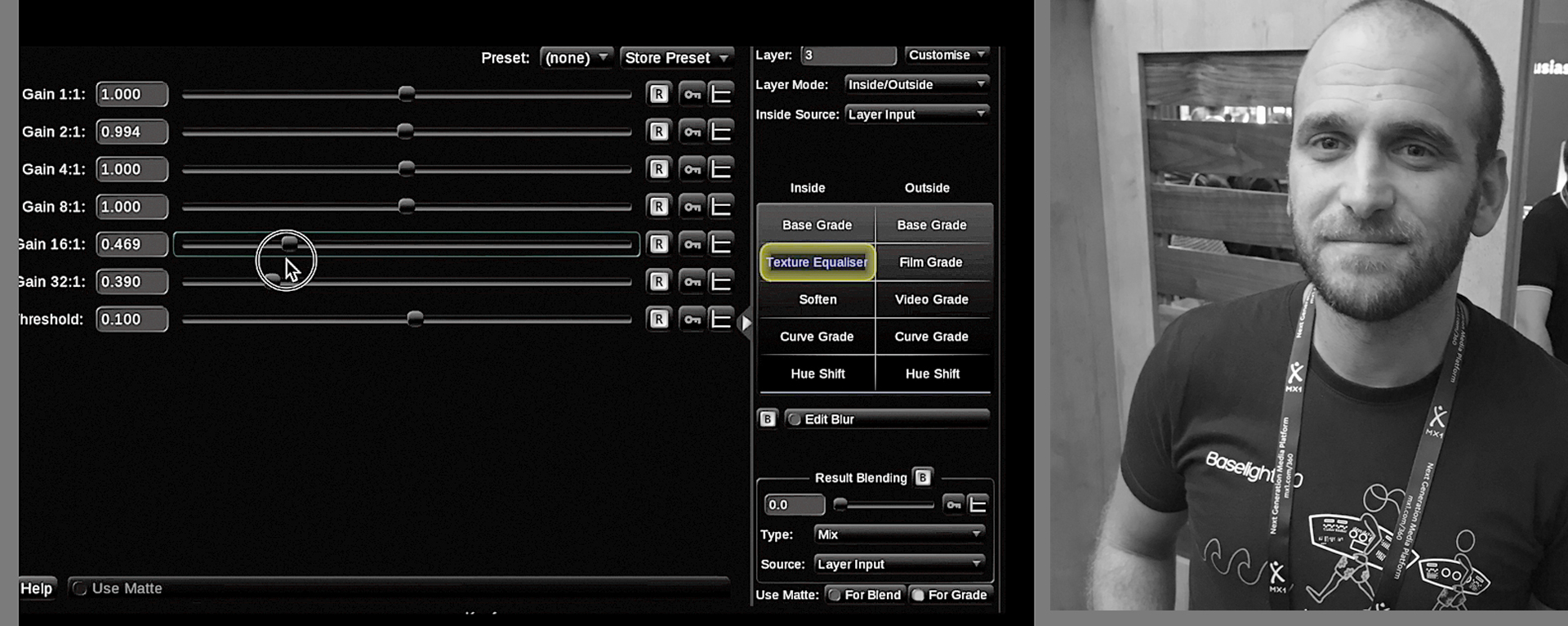

Daniele Siragusano from FilmLight gave me a demo of some cool features of Baselight. The alpha channel per pixel eliminates the need for an alpha channel for the entire image. I was impressed by the Texture Equalizer, which breaks up the image into frequency bands, as an audio equalizer does with sound. This allows you to apply image controls like softening or color to selected high, medium or low frequencies in the image. You can also apply Texture Highlights to soften HDR clipping artifacts, targeting only the highlights of the image.
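
For readers curious about what "frequency bands" can mean for an image, here is a minimal Python sketch of the general idea. It is not FilmLight's algorithm, which I have not seen. It assumes a single scanline of brightness values and uses two simple box blurs, with radii I picked arbitrarily, to split it into high, mid and low bands that add back up to the original. Each band then gets its own gain, like the sliders on an audio equalizer.

```python
def box_blur(row, radius):
    """Average each sample with its neighbours, shrinking the window at the edges."""
    n = len(row)
    out = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        out.append(sum(row[lo:hi]) / (hi - lo))
    return out


def split_bands(row, small_radius=1, large_radius=4):
    """Split one scanline into high, mid and low bands that sum back to it."""
    fine = box_blur(row, small_radius)      # fine detail removed
    coarse = box_blur(row, large_radius)    # fine and medium detail removed
    high = [x - f for x, f in zip(row, fine)]
    mid = [f - c for f, c in zip(fine, coarse)]
    low = coarse
    return high, mid, low


def equalize(row, gains=(1.0, 1.0, 1.0)):
    """Recombine the bands with one gain per band (high, mid, low)."""
    bands = split_bands(row)
    return [sum(g * b[i] for g, b in zip(gains, bands)) for i in range(len(row))]


scanline = [0.1, 0.1, 0.8, 0.2, 0.9, 0.1, 0.5, 0.5, 0.5, 0.6]
unchanged = equalize(scanline)                         # gains of 1 rebuild the input
softened = equalize(scanline, gains=(0.3, 1.0, 1.0))   # attenuate fine detail only
```

Lowering only the first gain softens fine texture while leaving the broad shapes and overall brightness alone, which is the kind of selective control that a single blur cannot give you.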

+++

3. VFX in Real Time

Adam Myhill from Unity and VFX consultant Habib Zargarpour gave a presentation of possible future workflows for VFX.

Traditionally, VFX camera motion is created by designing a set of keyframes and then interpolating between them, a rendering process that takes a lot of time and computer power. As an example, Adam stated that each frame of James Cameron's Avatar took an average of 90 hours to render.
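
As a rough illustration of keyframing, here is a minimal Python sketch that linearly interpolates a camera position between keyframes. The keyframe values are made up, and production tools typically use smoother curves than straight lines. Note that the interpolation itself is cheap; it is rendering the image for each resulting frame that eats the time and computer power.

```python
def interpolate(keyframes, frame):
    """Linearly interpolate a camera position between (frame, (x, y, z)) keyframes."""
    keyframes = sorted(keyframes)
    if frame <= keyframes[0][0]:
        return keyframes[0][1]      # before the first key: hold the first position
    if frame >= keyframes[-1][0]:
        return keyframes[-1][1]     # after the last key: hold the last position
    for (f0, p0), (f1, p1) in zip(keyframes, keyframes[1:]):
        if f0 <= frame <= f1:
            t = (frame - f0) / (f1 - f0)   # 0.0 at the first key, 1.0 at the second
            return tuple(a + t * (b - a) for a, b in zip(p0, p1))


# Hypothetical camera move: three keyframes over 48 frames.
keys = [(0, (0.0, 1.5, -10.0)), (24, (4.0, 1.5, -6.0)), (48, (4.0, 3.0, 0.0))]
halfway = interpolate(keys, 12)   # -> (2.0, 1.5, -8.0)
```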

One of the problems with present VFX workflows is that if a filmmaker wants to change an element of the finished scene, for example to change the lighting, the entire scene has to be rendered anew.

Adam demonstrated very fast changes of HD images using Unity software on his laptop, for example lowering the position of the sun source and instantly changing all the shadows in the image. He stressed that Unity's fast rendering enables filmmakers to work much more freely as they design and modify VFX shots. "Filmmakers come to Unity to save render time, but stay for the freedom it gives them."

Adam acknowledged that the quality of the images he was demonstrating was not yet good enough for features, but that they had been used on television series.

+++

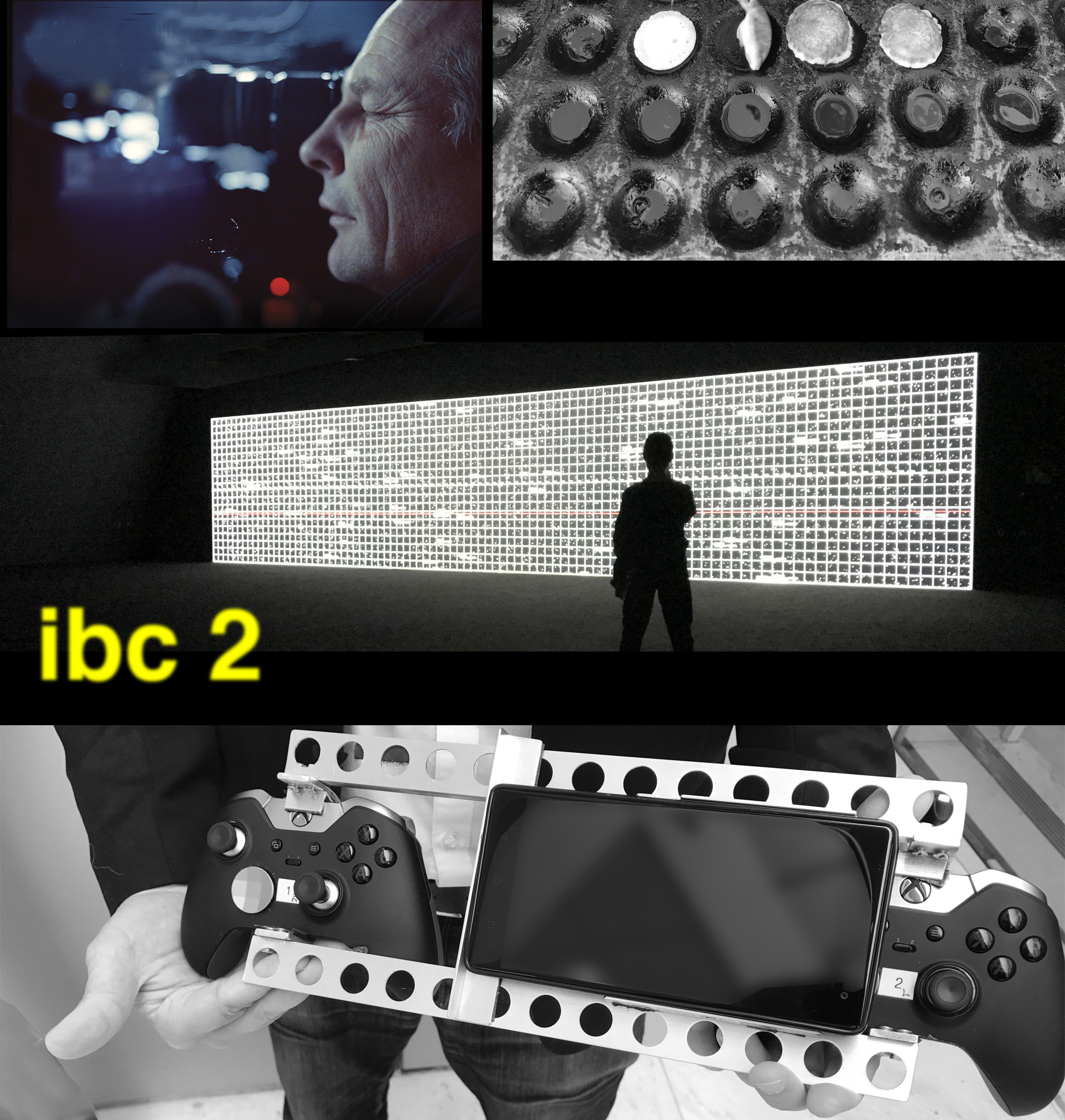

Habib gave a presentation with a custom-made virtual camera rig, using Xbox Elite controllers and a Lenovo Phab 2 Pro with Google’s now-discontinued Tango system. He used this virtual camera to demonstrate "shooting" and designing VFX moves in real-time. He uses the hardware in conjunction with Unity and the Expozure module.

Habib goes on film sets and hands the virtual camera rig to directors like Jon Favreau (on The Jungle Book), Steven Spielberg (on Ready Player One) and Denis Villeneuve (on Blade Runner 2049), allowing them to design and shoot virtual sequences themselves in real-time, and then quickly modify the sequence on set. Habib proved his point by demonstrating the real-time process of shooting a spaceship fly-by with footage from Blade Runner 2049.

+++

4. VR

VR filmmaker Jannicke Mikkelsen, FNF, presented Lunar Window, an interactive installation that she created for the Moon Landing anniversary at the Kennedy Space Center.

Jannicke gave a live demonstration of interactive footage using the wonderful HTC Vive system. She showed imagery of the lunar landscape on the screen adapting to her position as she moved across the IBC stage. I spoke with Jannicke and her cinematographer friend Nina Badoux after the panel.

+++

5. Cinematic Art

I believe that cinematography is not just a craft, but also an art form. So it seems natural to me that a growing number of contemporary artists are using cinema technology. (Indeed, I have created art installations myself using video and DMX lighting.)

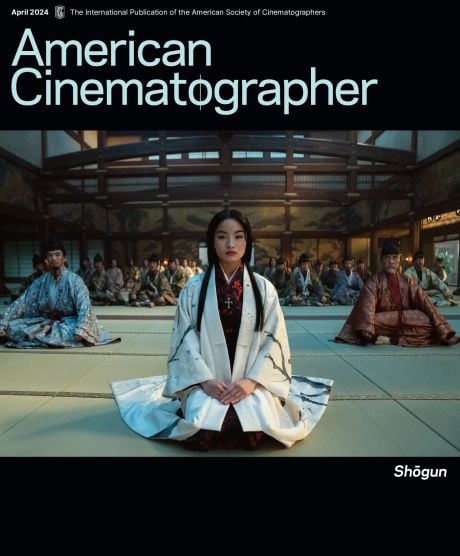

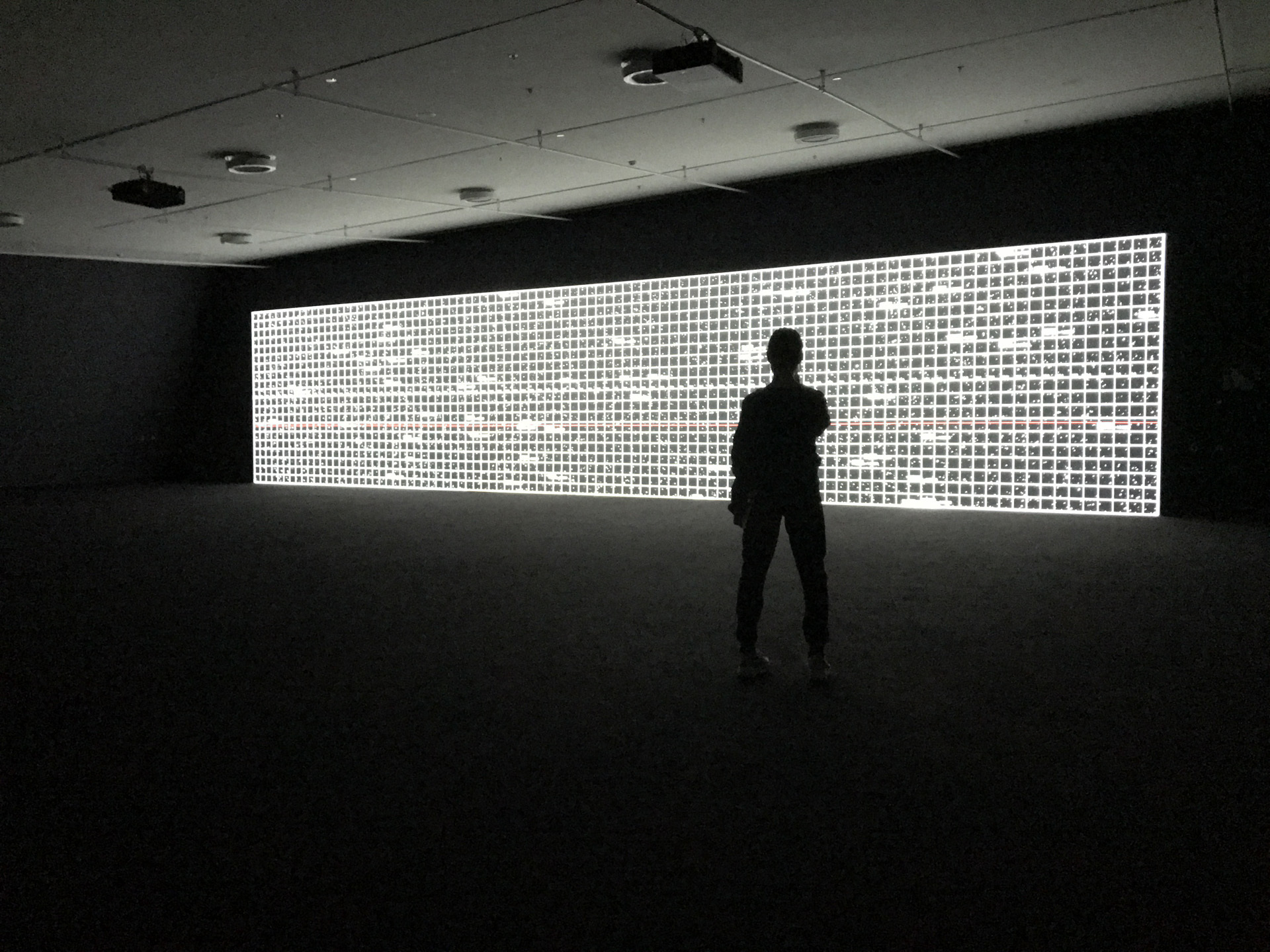

One of the high points of my Amsterdam experience was the amazing exhibit at the Eye Filmmuseum by media artist/composer Ryoji Ikeda. To me, the radar is Ikeda's masterpiece, a giant screen in a black space that evokes the constant scanning of the night sky with abstract geometric and data imagery. The radar's ultra-wide 4.8:1 aspect ratio (5760 x 1200 pixels) is created by 3 overlapping Panasonic DLP projectors, on a screen roughly 50 feet wide. At that width Ikeda's "art movie" becomes an environment.
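
For readers who like to check the numbers, here is the arithmetic as a short Python sketch. The pixel dimensions and the 50-foot width come from the paragraph above; the screen height is my own estimate, derived on the assumption that the image fills the screen.

```python
width_px, height_px = 5760, 1200
aspect_ratio = width_px / height_px            # 4.8, i.e. 4.8:1

screen_width_ft = 50                           # "roughly 50 feet", so only approximate
screen_height_ft = screen_width_ft / aspect_ratio   # a little over 10 feet
```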

The rhythmic imagery and electronic sound of the radar create an extraordinarily poetic, ambient environment that evokes our search for meaning through data.

Ikeda is one of a growing number of contemporary artists using cinema technology. As I sat against the black wall bathed in swaths of Ikeda's light, it occurred to me that HDR would be very well suited to modulate powerful art installations like this one. The exhibition ends on December 2nd.

+++

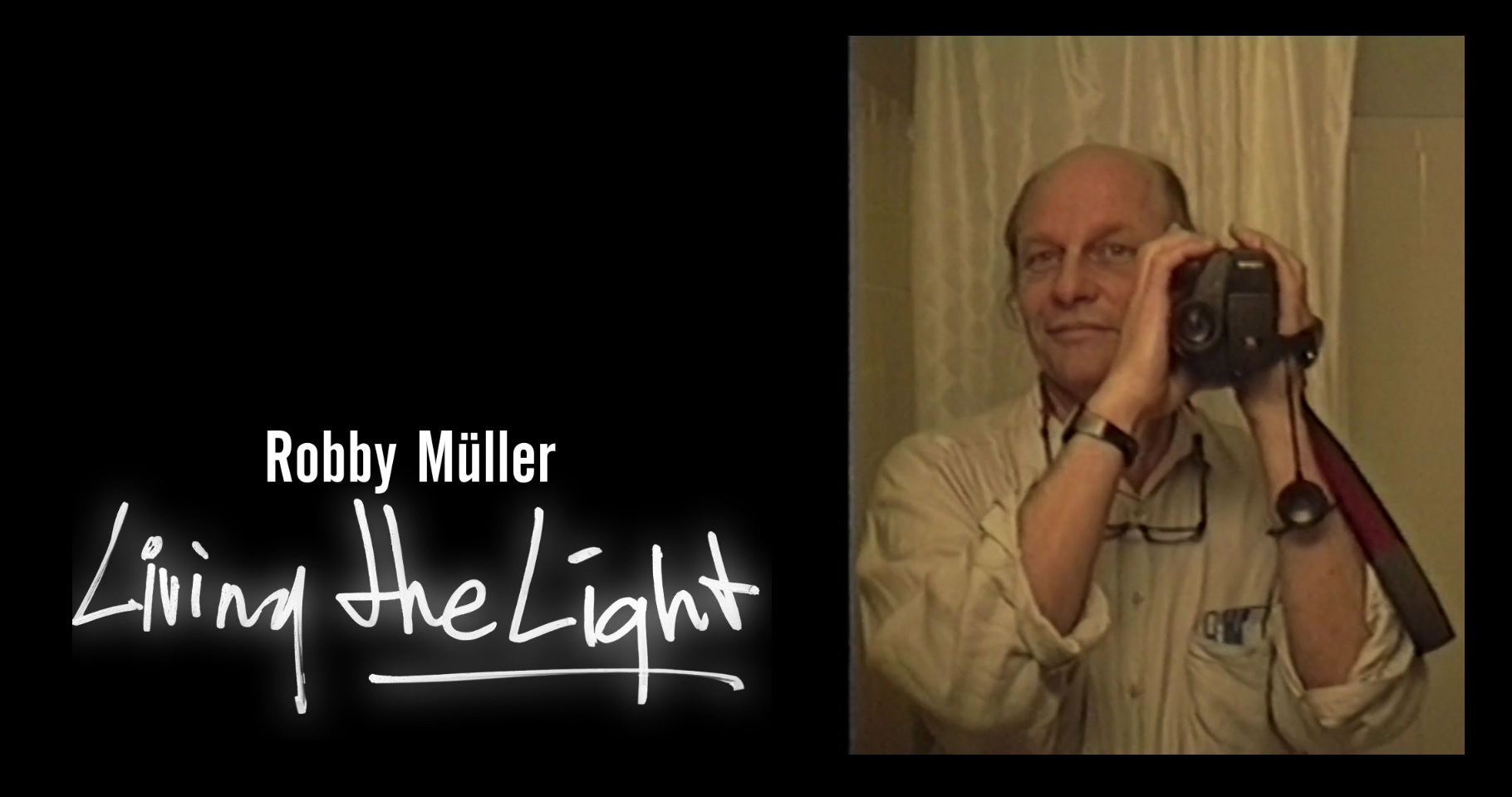

6. Living the Light

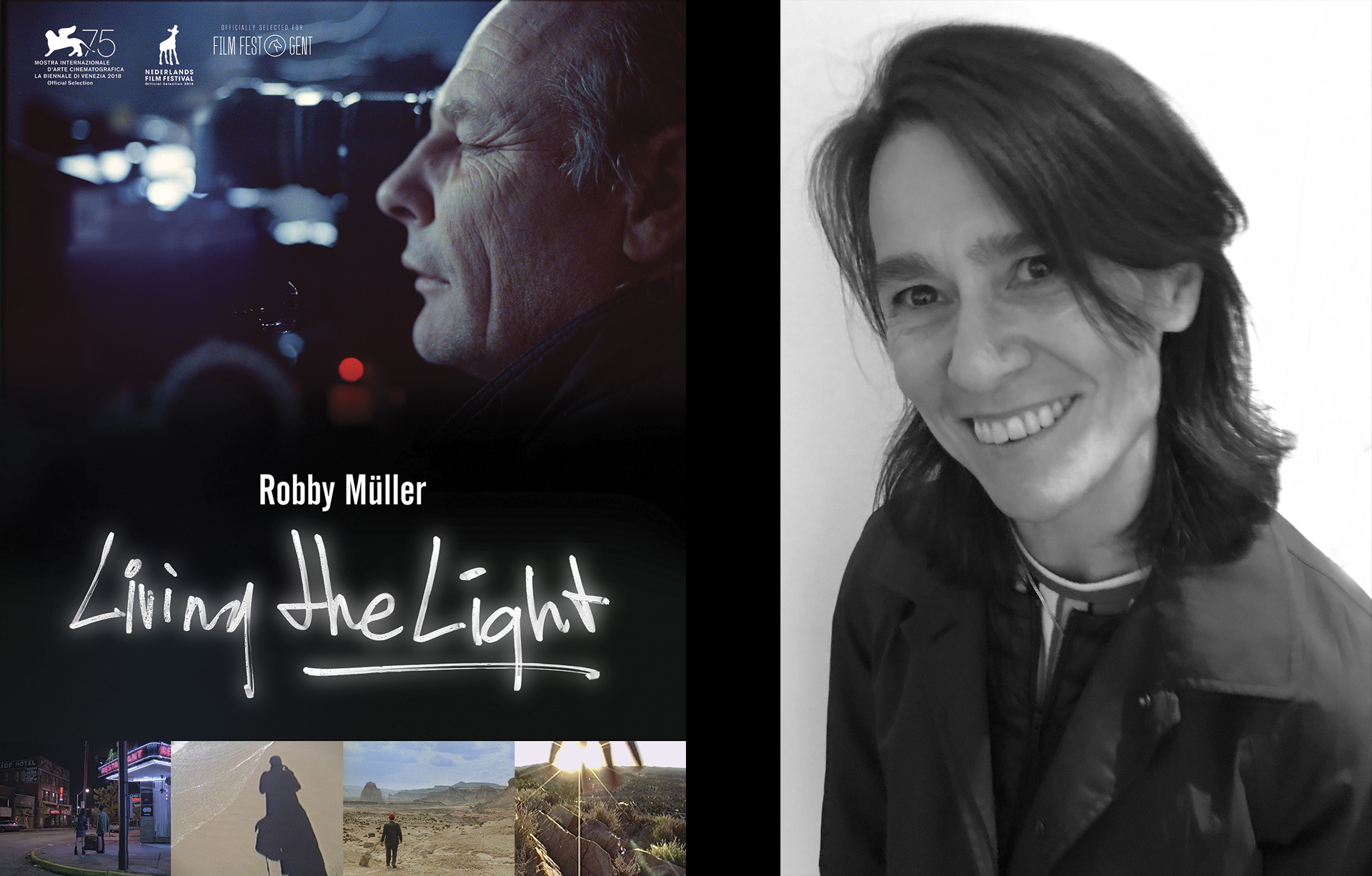

The Eye Filmmuseum provided another high point of my Amsterdam visit. Director Claire Pijman, NSC, held the Dutch premiere of her documentary, Living the Light, about the recently departed cinematographer Robby Müller. Claire has created a beautifully poetic movie that artfully combines footage from Robby Müller's Hi8 video diaries with footage from some of his iconic features, and comments by collaborators Wim Wenders, Lars von Trier and Jim Jarmusch, who also contributed to the soundtrack.

Claire succeeds in presenting Müller's footage in a way that invites the viewer to put himself or herself in the shooter's shoes... and soul. You are not just watching Robby Müller's video sketches and diaries, you are imagining what he's feeling as he's filming them. Living the Light is a wonderful, intimate homage to a great cinematographer.

+++

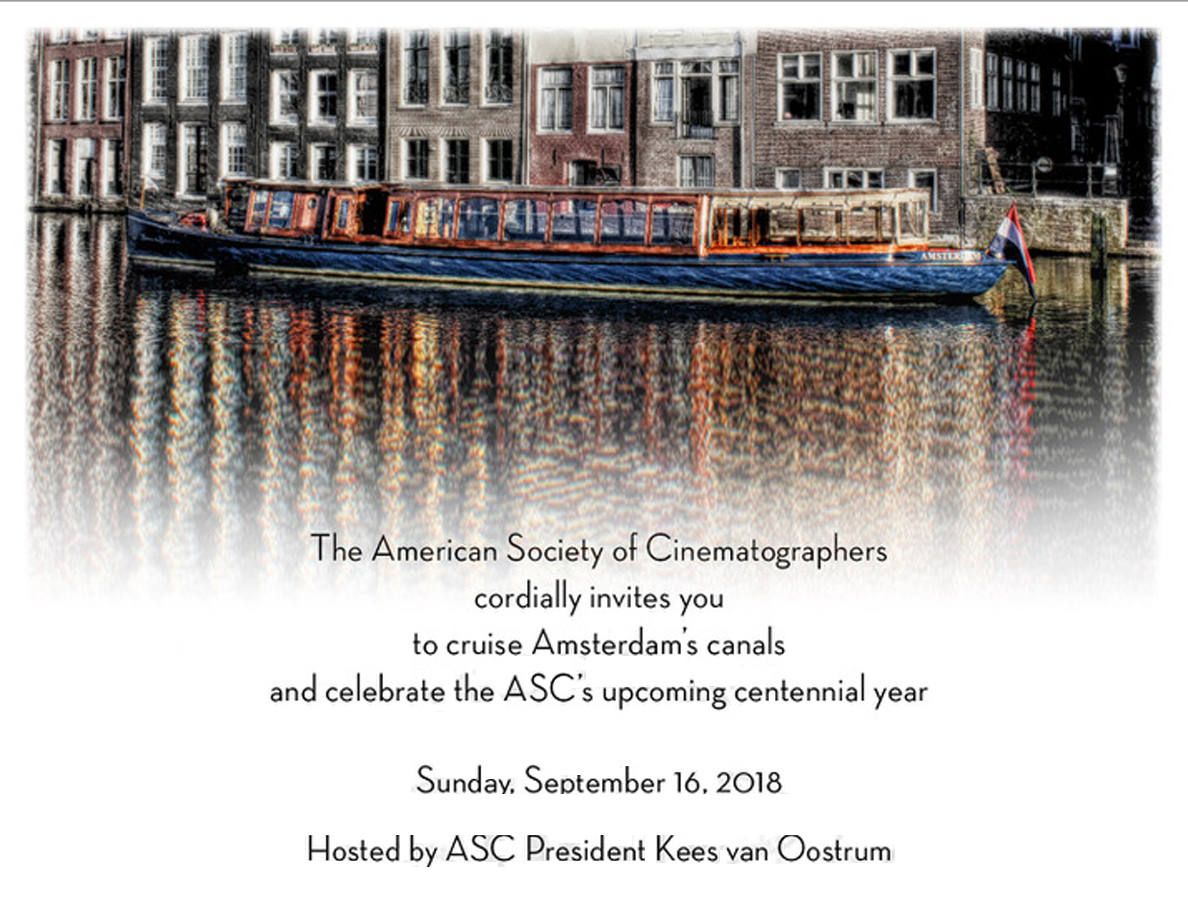

7. ASC Cruise

Next year, the ASC will celebrate its 100th anniversary. In anticipation of the upcoming celebrations, ASC president Kees van Oostrum and vice-president Bill Bennett hosted friends and sponsors of the society on a scenic boat cruise from the convention center to the Eye Filmmuseum in Amsterdam harbor.

+++

PART ONE: IBC - 1. Sensors, Screens, Boyle, Bennett

+++

LINKS

LED

kinoflo.com: New Products

k5600.eu

Frequency Post

filmlight.ltd.uk

filmlight.ltd.uk: Baselight v5 pdf

VFX in Real Time

unity3d.com

VR

jannickemikkelsen.com: Lunar Window

Cinematic Art

eyefilm.nl: Ryoji Ikeda exhibition at the Eye Filmmuseum

Robby Müller Film

vimeo: Trailer for Robby Müller Living the Light

ASC

theasc.com: history of the ASC

+++

Unless otherwise indicated, all images are copyrighted by Benjamin B

Feel free to share images on the net with the following credit:

(credit Benjamin B, thefilmbook)

+++
