ICS 2018 — Academy of Motion Picture Arts & Sciences: ACES and More

Experts discuss their experience using ACES and detail the latest methods for understanding the color characteristics of solid-state lighting sources.

For this unique four-day event, the American Society of Cinematographers invited peers from around the world to meet in Los Angeles, where they would discuss professional and technological issues and help define how cinematographers can maintain the quality and artistic integrity of the images they create.

Experts discuss their experience with the Academy Color Encoding System and detail the latest methods for understanding the color characteristics of solid-state lighting sources, vital subjects in the current landscape of production and post.

On Tuesday, June 5, International Cinematography Summit attendees visited the Academy of Motion Picture Arts & Sciences for discussions on ACES, the exposure index investigation project and the spectral similarity index, followed by a tour of the Academy’s imaging lab and Stella Stage.

Academy of Motion Picture Arts & Sciences president John Bailey, ASC, who is also an Academy Governor of the Cinematographers Branch and sits on the Science and Technology Council, greeted the group. “Good morning, fellow cinematographers from here and around the world, and welcome to the AMPAS Pickford Center for Motion Picture Study,” he said. “I greet you on behalf of my fellow Academy cinematographers to the Linwood Dunn Theatre, named after a pioneer in motion picture visual effects technology. This very building, like the ASC Clubhouse, is steeped in Hollywood history.” Bailey noted that Dunn, also a member of the ASC, received the first of his two Oscars for the Acme/Dunn optical printer, and that a restored example of the device is on display in the theater’s foyer.

In his current role, said Bailey, he’s been able to further explain cinematography to other Academy members and focus on the other crafts. “In the entire history of the Academy, only one other president, production designer Gene Allen, came from below the line,” he said, noting that he’s received significant support in his new role. “It’s a testament to the significance that cinematographers are getting today,” he said. “It’s a newfound respect. We approach our work and our aesthetics and guiding principles, not based on narrow technology or equipment, but because we can express our thoughts and communicate on the set.”

He reported that he’s been able to spend this first year as AMPAS president engaging the 17 branches represented by the Board, most of which are crafts. He left the group with the comment that, “cinematographers today not only have the opportunity but the expectation that we’ll bring a global sense of what cinema is to our work.”

ACES: An Update on the Academy Color Encoding System

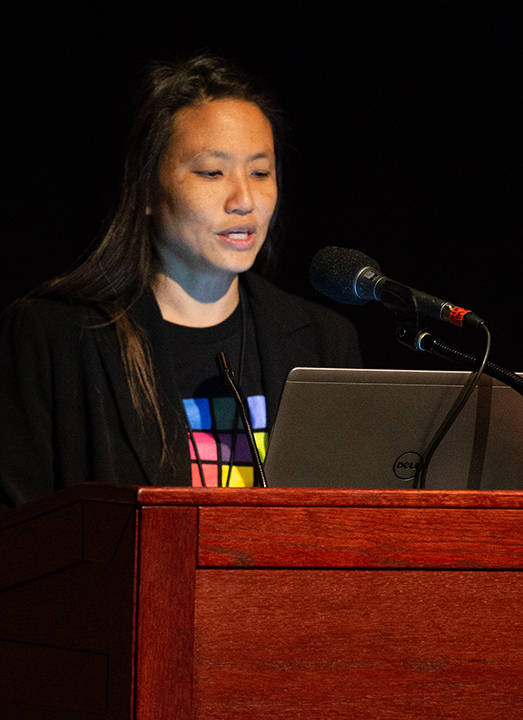

Universal Pictures vice-president of creative technologies Annie Chang, who is also the ACES Project chair, spoke about the background of and latest updates to the methodology. “ACES is a full set of data, metadata and file-format specifications for digital production,” Chang explained. “You have SMPTE standardized components to help you create the look you want to get, and it’s implemented in cameras, DIT carts, color correctors and VFX software. It’s a basis for clear color communication everywhere.”

Chang pointed out the key benefits of ACES for cinematographers. “It forces questions to be asked at critical points in the pipeline that are necessary for consistent color reproduction on every display,” she said. “What types of LUTs are you planning to use? Who’s making the LUTs? Are they usable for all displays? These questions are important and sometimes we forget to ask them ahead of time. It also maintains maximum image fidelity throughout the workflow and reduces pre-grade time, leaving more time for creativity. It also enables HDR and wide color gamut deliverables without committing to a proprietary technology, and provides a future-proof archival element.” She encouraged attendees to educate themselves at ACESCentral.com, a free online resource.

The hard part, added Chang, is implementation. “We have to test tools as we roll it out,” she said. To do so, the ACES committee, with the leadership of cinematographer Geoff Boyle, NSC, has been remastering The Troop, a film about the King of England’s royal horse artillery, to 4K UHD/HDR. “It’s a case study,” explained Chang. “We’re using it to test the archive process and tools, and help create best practices for archive. If you can recover the bits in 50 years or 100 years, you will know what the picture should look like because ACES IMF is a standard.”

Chang then called Boyle and cinematographer Andrew Shulkind to the stage. Boyle described how he wanted to use ACES on very low-budget films. “I used to have printer lights,” he said. “It dawned on me in 2005 when I found myself screaming at a DIT that it was my picture, that I had no control. In a totally digital world, I found myself not knowing what was right or wrong. I took a standard LUT to 12 different post houses, and they were all different. There was no standard.” He shot the feature Attack of the Adult Babies to beta-test ACES input and output transforms with FilmLight. “I generated a LUT at the beginning that combined the look of the film and the IDT/ODT of ACES, and we shot with that on all the monitors,” recounted Boyle. “Everyone saw the pictures through the LUT and ACES process. The final post was done in Quantel Rio, again in ACES, and it looked the same the whole way through the process. I have control again and that really excites me.”

“It goes through no DIT, no on-set colorist,” Boyle continued. “Just the input transform and the output transform – don’t touch it and it’s fine. It takes me back to shooting with Kodak gray scale. I wanted to get back to this, and that’s what I’ve done in this test.”

Shulkind, who discovered ACES through his colorist, noted the potential problem inherent in a digital era with a plethora of cameras and displays. “ACES has solved that problem,” he said. He described a film where he shot with a combination of Canon, Alexa and Red cameras. “I wanted 3200 ISO and T1 lenses and the tiniest amount of light,” Shulkind continued. “We shot an expanded range, but if you shoot that on set, it’s a super-lifted image. That’s where ACES came in in a very natural way. As ACES went through the whole pipeline, we looked at a grade that crushed everything back down.”

For a VFX-heavy film entitled The Ritual, Shulkind says the production shot in Romania, the post work was done in the U.K. and the VFX in Denmark. “We needed a protocol to communicate, and ACES gives us the biggest bucket possible,” he described. “Everything you want is inside that triangle.”

Chang asked Boyle and Shulkind if they had advice for cinematographers wanting to use ACES for the first time. “It’s as complicated as you want it to be,” said Boyle. “Post houses with secret sauce will complicate it.” Shulkind agreed. “I try to get the producer, DIT and colorist on board as soon as possible,” he said. “The only time it ever comes up again is in the final grade.” It requires communication to avoid surprises. “But as cinematographers, we have to say, ACES is something we want to do,” he said. “We’re the stewards of the image. In the future, with even more displays, this kind of process becomes even more important and our job of stewarding that look, no matter where it appears, also becomes more important.”

Boyle noted that although Attack of the Adult Babies was intended for Blu-ray and DVD release, it ended up premiering on an Imax screen. “The producers wouldn’t let me re-grade it for that screen,” he recalled. “But I was able to re-render it with another ODT. It wasn’t the best it could be on an Imax screen, but it was staggeringly better than a Rec709 going up. That’s an example of what ACES can do on a low-budget film.” He also said that, “colorists I worked with love that a lot of their work has been done for them. With ACES, their work is now just to do the creative stuff. It allows them to stay on a high level and start working with nuance.”

Chang asked about what the two cinematographers saw in the future. Shulkind described an 18-camera rig his company created for a 360-degree background plate for a Nike project. “We didn’t want to use GoPros because we needed dynamic range,” he said. “At this time, anything you’re shooting spherically relies on cameras with limited dynamic range, and that’s always key. As we move into these more interactive environments, that’s when ACES comes into play. Whatever the new imagery technology, we need to keep the same stewardship over the image.”

When it comes to commercials, added Shulkind, he’s rarely in the grade, which makes ACES important: “I use ACES on commercials whenever possible. It’s great at setting a baseline, and if you have a colorist you are not able to communicate with, it sets a bar.”

One ICS attendee asked how to convince an entire production to go with ACES, especially if multiple VFX houses will be involved. “It’s really easy,” said Boyle. “Just tell the producer it will save him money. And if the post houses can’t click on ACES, they shouldn’t be doing their bloody jobs to begin with.”

Chang reported that, when she worked at Marvel, its movies, which typically relied on 12 VFX houses on a given project, all used the ACES workflow. “VFX houses love ACES,” she said. “They know what they’re getting. They already work in a linear space, and being able to get the looks you put on set in a standardized fashion really helps the viewing platform for them.”

Shulkind’s strategy is to have a private conversation with the post producer, VFX supervisor and line producer, telling them all that ACES will save them money without costing anything up front. “They each have a vested interest in being paranoid,” he said. “But they have a vested interest in actually using ACES. Change is hard; it takes initiative to do something a little bit different. But ACES is replacing the standards we lost when we moved away from film.”

(You’ll find much more about ACES and its use on indie features in this related article.)

ACES: The Exposure Index Investigation Project

An ACES IDT task force, headed by Steve Yedlin, ASC; Technicolor vice president of imaging research and development Joshua Pines; and Deluxe’s EFILM vice president of technology Joachim “JZ” Zell, is taking a close look at the exposure index. “ACES provides input LUTs (IDTs), which make all cameras match in ACES, and output LUTs (ODTs), which take care that all different monitoring devices match,” explained Zell. “The problem is that we left it to the camera vendors to build their own IDTs, with the result that cameras don’t match well enough in ACES.”

The Exposure Index project has built a test studio, dubbed the “Esmeralda Room,” at the AMPAS Pickford Center with a well-defined set-up that the team members use to compare cameras. ICS attendees had a chance to tour the space. “We will help camera vendors build better IDTs for all their exposure indexes,” said Zell. “We’ll start with an exposure index of ISO 800 and will go to all settings from 10 to 5000 ASA from here.”

According to Zell, in 2015, Otto Nemenz provided the Red Epic, ARRI Alexa, and Sony F55 and F65, which the team used to shoot the same Macbeth chart, with the same lenses. “Then we put the footage of these four cameras into four color correctors, switched it all to ACES, and got 16 different results,” he said. In 2016, they tested more cameras, including the Panasonic VariCam and an iPhone (although they did have problems mounting the common lenses on the device). One piece of good news: the output of the color correctors all matched. “All the IDTs, RRT and ODTs are implemented in the same way,” said Zell. “But the IDTs to match cameras could still be improved. They’re cousins but not twin sisters.”

In 2017, the team tested yet more cameras at Panavision in Woodland Hills, especially new versions of already-tested cameras, including Red Weapon and Monstro2, Canon C700, ARRI Alexa and Panasonic VariCam, Sony F55 and F65 and the Panavision DXL2. The team of ASC members put the cameras through their paces with consistent lighting and a single Panavision lens.

As the ICS attendees toured the Esmeralda Room, Zell revealed it was the first time it had been shown to members of the public. “Every camera manufacturer can come in and shoot under the same conditions,” he explained. A Photo Research Spectroradiometer verifies the room status, and an exposure meter is used to set the camera’s ISO, shutter angle and frame-rate setting. “The distance will always be the same — 12' between gray card and the focal point of the camera,” said Zell. “We got special instructions for the lens — when setting the stop, start with the iris wide open and close down to the marked T-stop. Don’t start closed and open to your stop because lenses are marked to account for backlash in the mechanism.

“What we then decide to do is change the shutter angle and frame rate to calculate the ‘over and under’ stops at 800 ISO,” Zell continued. “And everything was recorded Mit Ohne Sound [MOS]. The goal is to ensure that a properly exposed 18 percent gray card should result in ACES linear RGB values of 0.18, 0.18, 0.18.”
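The over/under arithmetic Zell describes can be sketched in a few lines. This is only an illustration, not the task force's tooling: the 180-degree, 24 fps reference exposure and the function names are assumptions, and the model treats the camera as an ideal linear device with no clipping or noise.

```python
import math

MID_GRAY = 0.18  # target ACES linear value for an 18 percent gray card (from the text)

def exposure_time(shutter_angle_deg, fps):
    """Exposure time in seconds for a rotary-shutter angle and frame rate."""
    return (shutter_angle_deg / 360.0) / fps

def stops_from_reference(shutter_angle_deg, fps, ref_angle_deg=180.0, ref_fps=24.0):
    """Stops over (+) or under (-) relative to an assumed reference exposure."""
    ratio = exposure_time(shutter_angle_deg, fps) / exposure_time(ref_angle_deg, ref_fps)
    return math.log2(ratio)

def expected_gray(stops, mid_gray=MID_GRAY):
    """Scene-linear value an ideal camera would record for the gray card."""
    return mid_gray * 2.0 ** stops

if __name__ == "__main__":
    # Hypothetical bracket: aperture and ISO stay fixed, only time changes.
    for angle, fps in [(45.0, 24.0), (90.0, 24.0), (180.0, 24.0), (180.0, 12.0)]:
        stops = stops_from_reference(angle, fps)
        print(f"{angle:5.1f} deg @ {fps:4.1f} fps: {stops:+.1f} stops, gray = {expected_gray(stops):.3f}")
```

Because only exposure time changes, the lens and the ISO setting stay untouched across the bracket, which is presumably why the team varies shutter angle and frame rate rather than the iris.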

The next big step, said Zell, is to work on these cameras’ video taps. The Academy’s ACES technical lead Alex Forsythe joined Zell to answer some of the ICS attendees’ questions. One wanted to know about where ACES fits in the gap between the TV and film industries. Zell said that, “here at the Academy, we try to use the wording of the filmic cinematographer first, but the other group brings up a lot of interesting features, like waveform monitors and vectorscopes.” Forsythe noted that, “the difference between a video camera and a digital cinema camera is the processing or lack of processing. TV cameras hook up to a TV monitor so it does their processing and is finished. The digital cinema image is deliberately unfinished.”

Zell pointed out that the team has tested “a few cameras that do record Rec709 and RAW. We need to take care to look at both versions.”

Forsythe said that, “as part of ACES 1.0 [or ACES Next, as it’s being called], we’ll be including step-by-step documents on the usage of ODTs. We’re heading much more to user-oriented documentation that allows people to understand which ODT to use in which scenario and the intended output display it’s targeting.”

Maltz added that ACES also has a “big project going on with regard to open source software.” “If there is documentation you think could be improved, you can weigh in,” he said. “Go to ACESCentral.com. It’s not advertiser-supported. It’s a place to build community.”

Lighting: Spectral Similarity Index (SSI) Project

AMPAS Science and Technology Council co-chairs Joshua Pines and digital media technology advisor George Joblove head up the project to develop a Spectral Similarity Index (SSI), a new index expressly designed for the spectral evaluation of luminaires. With the advent of solid-state lighting (SSL) sources such as LEDs, existing indices such as CRI create unforeseen problems. The problem, explains the Council, is that, “in contrast to the relatively smooth, continuous spectral power distributions of blackbody emission, tungsten incandescence, and daylight (and the ISO standardizations of these sources), many solid-state sources are characterized by peaky, discontinuous, or narrow-band spectral distributions … [which] can wreak havoc with color rendition.”

That’s because film and digital cameras are all “expressly designed to work with, and are indeed optimized for, standard tungsten and daylight.”

As Pines explained, “Lamp color quality can be described by the Color Rendering Index [CRI], but it only describes colors as seen by the eye, not as seen by the camera. The eye, film and digital cameras all see colors differently, and lamp spectrum affects skin tone, makeup, costumes, props and sets.”

SSL is popular for several reasons, one of them being that it uses much less energy, making it a “green” or eco-friendly product on set.

Pines showed charts that pictured how most white LEDs have poor color rendering because of the peaks and troughs in the spectrum. “Seeing color is normally considered to be a three-part system: light source, objects reflecting light which have their own reflective properties, and our eyes,” he said. “And we can mathematically describe all three of these. But, unfortunately, that’s not the world we work in. We have a six-part system: same light source and objects, but now we have a camera looking at the object instead of our eyes, and then color correction, some sort of image display, and then our eyes see that image on that display. So it’s much more complex. For the purposes of analyzing solid state light sources, we can limit this to image capture.”

Pines discussed the concept of metamerism, the idea that two objects can appear to be the same color even if they have different (non-matching) spectral power distributions. “Although they have different properties, under daylight, they’ll appear the same and different under fluorescent lights,” he described. “Two that look the same are a metameric pair, and when they don’t match, it’s a metameric failure.”

To the human eye, continued Pines, many different spectra are perceived as the same color. But the spectral sensitivities of the camera are different. “The bad news is that all those colors that look the same to our eye don’t look the same to the camera, and vice versa,” he said. “There’s a fundamental difference: different cameras see things differently than our eyes and each other.”
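A toy calculation makes the point concrete. The sketch below models an observer's response in each channel as the sum, over wavelength bands, of illuminant power times surface reflectance times channel sensitivity. Every number in it is invented for illustration: the four coarse bands, the "eye" and "camera" sensitivities and the "peaky LED" spectrum are all hypothetical and do not describe any real observer, camera or luminaire.

```python
def response(illuminant, reflectance, sensitivities):
    """Per-channel response: sum over bands of illuminant * reflectance * sensitivity."""
    return [
        sum(e * r * s for e, r, s in zip(illuminant, reflectance, channel))
        for channel in sensitivities
    ]

# Four coarse wavelength bands; all values are made up for illustration.
FLAT_LIGHT = [1.0, 1.0, 1.0, 1.0]   # smooth, continuous source
PEAKY_LED = [1.0, 1.0, 0.25, 1.0]   # same source with a trough in band 3

SURFACE_A = [0.5, 0.5, 0.5, 0.5]
SURFACE_B = [0.5, 0.75, 0.25, 0.5]  # different spectrum from SURFACE_A

# Three channels each; the middle channel weights bands 2 and 3 differently.
EYE = [[1, 0, 0, 0], [0, 1, 1, 0], [0, 0, 0, 1]]
CAMERA = [[1, 0, 0, 0], [0, 1, 0.5, 0], [0, 0, 0, 1]]

if __name__ == "__main__":
    # Metameric pair: the eye cannot tell A from B under the smooth source.
    print("eye, flat light:   ", response(FLAT_LIGHT, SURFACE_A, EYE), response(FLAT_LIGHT, SURFACE_B, EYE))
    # The camera, with different sensitivities, separates them.
    print("camera, flat light:", response(FLAT_LIGHT, SURFACE_A, CAMERA), response(FLAT_LIGHT, SURFACE_B, CAMERA))
    # Metameric failure: under the peaky source even the eye sees a mismatch.
    print("eye, peaky LED:    ", response(PEAKY_LED, SURFACE_A, EYE), response(PEAKY_LED, SURFACE_B, EYE))
```

The two surfaces match only when the observer's sensitivities and the light's spectrum happen to hide their difference, so changing either the observer or the source can break the match.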

Pines showed graphs of the spectral power distribution of various LEDs. “In one case, we can have a fabulous Macbeth chart shot under daylight, but under a particularly bad LED, it looks different,” he said. “Even when the gray scales are matched, we have different saturation characteristics. Although they can fix that in color correction, isolating specific colors in every shot is difficult.”

In 2010, cinematographer Daryn Okada, ASC ran into some early problems with LEDs and, under an early SSL project, shot tests with tungsten and LED sources, showing that film and digital cameras all have different spectral sensitivities and therefore varying color reproduction under different light sources. “Some smart people implemented a Color Predictor software tool, so we’re able to model spectral concentrations of light sources, put in different sensitivities such as a specific camera or human visual system and mathematically compute the differences between them,” said Pines, who added that Predictor software was used for much of the analysis.

Pines noted that SSL typically refers to LED lights, but can also refer to OLED. “The problem with SSL sources is that there is no standardized spectrum to use for predictable camera response,” he said. “Typical spectrum does not result in the same colors for different cameras, film or the human eye.” In addition to problems with the Color Rendering Index, another index, the Television Lighting Consistency Index (TLCI), assumes a Rec709 broadcast camera rather than the spectral sensitivities of cameras used for digital cinema and episodic image acquisition. “Unfortunately, TLCI assumes a Rec709 rendering rather than a P3 digital cinema color gamut,” said Pines.

To address the problems inherent in existing color-rendering indices, the team proposed a six-month project to look at existing metrics and see if they could come up with another numbering system that would be useful. That was the beginning of the Spectral Similarity Index. “The SSI is an index for evaluating luminaires used for motion picture photography but also for TV and still photography and human vision,” said Pines. “A single number on a scale of 1 to 100 indicates similarity of spectrum of a test illuminant. It can be thought of as a ‘confidence factor’ that rendered colors will be as expected.” Values above 90 should be very good, added Pines, between 80 and 90 pretty good, with problems at any number 60 or below. “Predictability is what you get,” he noted. “Low-energy spikes and noise are smoothed out in the index calculation.

“We consider this a carrot and stick to manufacturers, since a low SSI number wouldn’t be good for them. If they score high, they’ve actually gotten their SSL source to be like continuous light sources. It’s very hard to game this system, whereas with CRI, you can construct something that passes its tests but is a horrible light source.”

But a low SSI value doesn’t mean that the color rendering for a particular camera will be bad, he continued. “A high value for SSI just means that it is very likely you don’t need to worry about color rendering — the colors you see will match the colors seen by most digital cameras,” he said. “But SSI doesn’t use any spectral sensitivity assumptions. The algorithm can be used against any reference spectrum.”

The next step of the project, said Pines, is to talk to lighting manufacturers. “We’re actively encouraging spectrometer manufacturers to implement SSI to enable broad field use,” he said. “Several have expressed interest, so please encourage them. To validate SSI for production, we propose international standardization.”

More information will appear at www.Oscars.org/ssi and the project team can be reached at [email protected]. SSI is also fully described in a 2016 SMPTE presentation (accessible to SMPTE and IEEE members here).